Vitis Accelerated Libraries

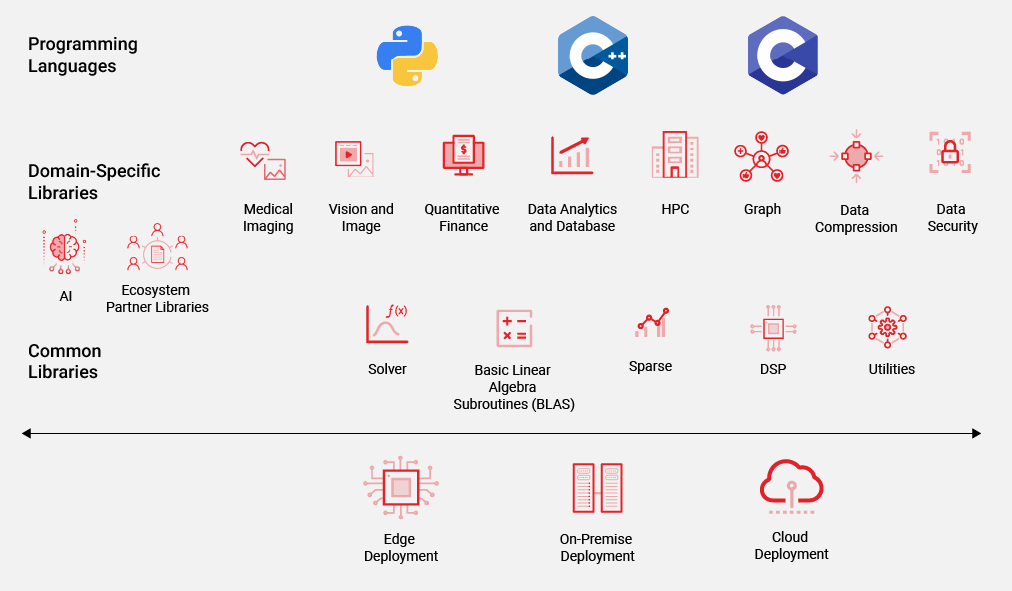

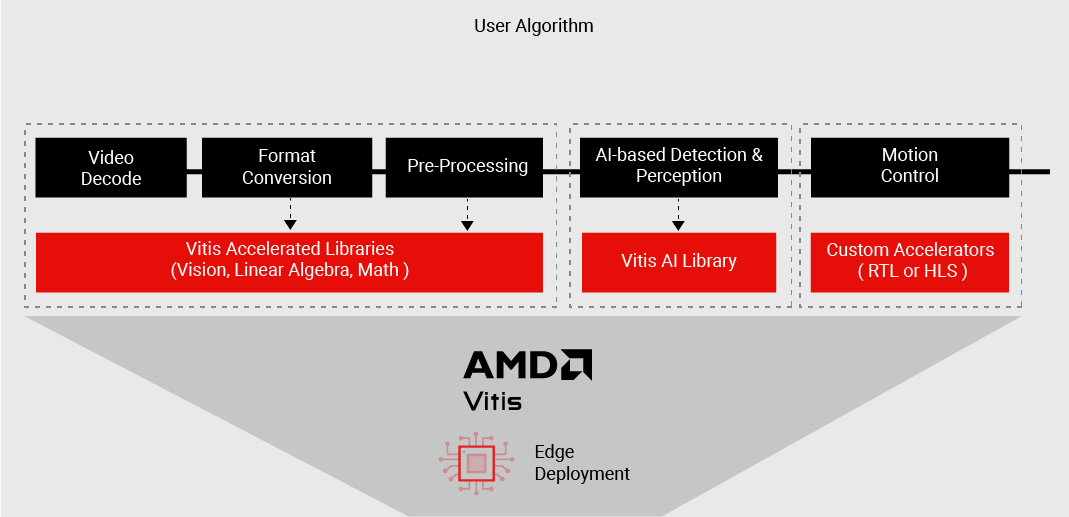

The Vitis™ unified software platform includes an extensive set of open-source, performance-optimized libraries that offer out-of-the-box acceleration with minimal to zero code changes to your existing applications.

- Common Vitis accelerated libraries, including Solver, Basic Linear Algebra Subroutines (BLAS), Sparse, DSP, and Utilities, offer core functionality for a wide range of applications.

- Domain-specific Vitis accelerated libraries offer out-of-the-box acceleration for workloads like Vision and Image Codec Processing, Quantitative Finance, HPC, Graph, Database, Data Analytics, Data Compression, and more.

- Leverage the rich growing ecosystem of partner-accelerated libraries, framework plug-ins, and accelerated applications to hit the ground running and accelerate your path to production.

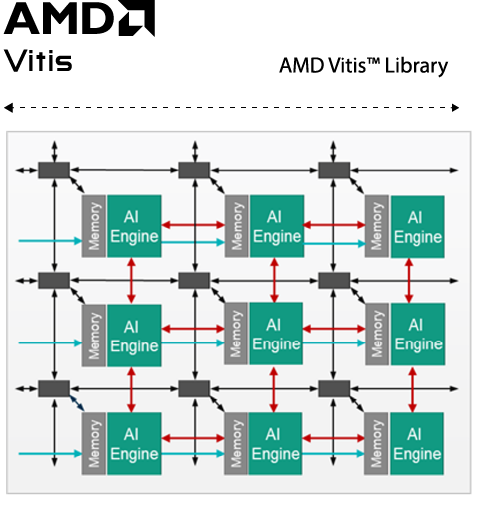

Vitis libraries now contain DSP, matrix, and other functions that are optimized for implementation in the AI Engine portion of Versal™ devices.

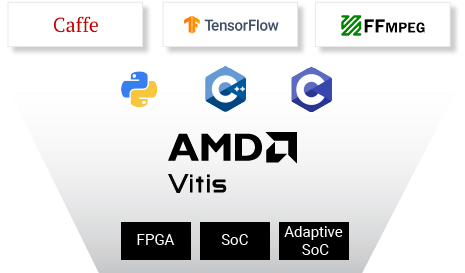

Use in Familiar Programming Languages

Use Vitis accelerated libraries in programming languages you already know, such as C/C++. Some libraries, such as the Vitis BLAS library and the Vitis Quantitative Finance library, also include Python functions at Level 3 (L3). By leveraging AMD platforms as an enabler in your applications, you can work at the application level and focus your core competencies on solving challenging problems in your domain, accelerating your time to insight and enabling faster innovation.

Whether you want to accelerate portions of your existing x86 host application code or develop accelerators for deployment on AMD embedded platforms, calling a Vitis accelerated library API or kernel in your code offers the same level of abstraction as any other software library.

Scalable and Flexible

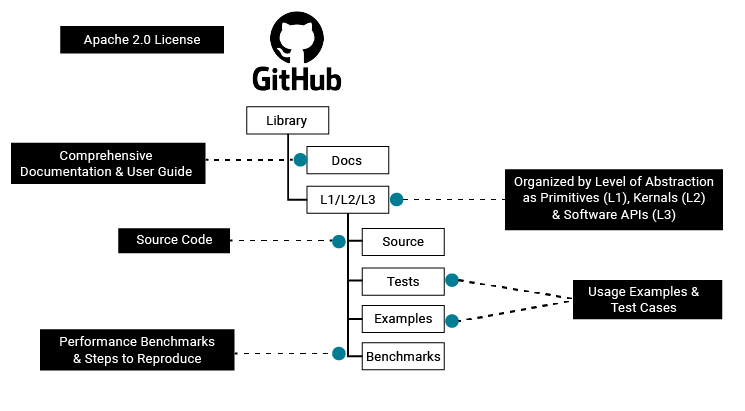

Vitis accelerated libraries are available to all developers through GitHub and are scalable across all AMD platforms. Develop your applications using these optimized libraries and deploy them across our platforms at the edge, on premises, or in the cloud without having to reimplement your accelerated application.

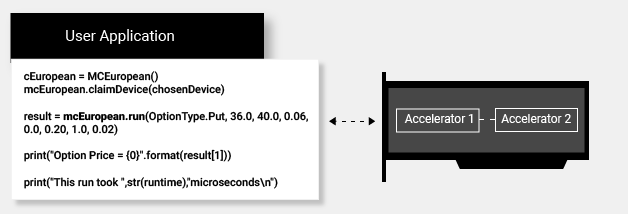

With the rapid prototyping and quick evaluation that AMD can bring to your applications, you can use these libraries as plug-and-play accelerators, called directly as APIs in the user application for workloads like Vision and Image Codec Processing, Quantitative Finance, HPC, Graph, Database, and Data Analytics, among others.

When designing custom accelerators for your application, use Vitis library functions as optimized algorithmic building blocks, modify them to suit your specific needs, or use them as references to completely design your own. Choose the flexibility you need!

Combine domain-specific Vitis libraries with pre-optimized deep learning models from the Vitis AI library or the Vitis AI development kit to accelerate your whole application and meet overall system-level functionality and performance goals.

Vitis Library Functions Optimized for Versal AI Engine

The AI Engines in Versal devices provide very high compute density for vector-based algorithms.

The following libraries have AI Engine additions:

- Vitis DSP Library

- Vitis Vision Library

- Vitis Solver Library

AI Engine code can be found in the "AIE" directories: under L1 for AIE-only functions, and under L2 for functions that combine AIE and PL code.

Note: For more details, refer to the page for each library.

Library File Organization

Typically, a Vitis library includes three levels (L1/L2/L3) of functions:

- L1 Primitives

- L2 Kernels

- L3 Software APIs
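One way to picture the three levels is as layers that build on one another. The sketch below is a plain-Python analogy and not Vitis code: the names `l1_dot`, `l2_gemv_kernel`, and `l3_gemv` are hypothetical, and the role assigned to each level in the comments is an assumption inferred from the level names rather than something this page specifies.

```python
from typing import List

Vector = List[float]
Matrix = List[Vector]


def l1_dot(x: Vector, y: Vector) -> float:
    """L1-style primitive (hypothetical): a small, reusable building block."""
    if len(x) != len(y):
        raise ValueError("vectors must have the same length")
    return sum(a * b for a, b in zip(x, y))


def l2_gemv_kernel(a: Matrix, x: Vector) -> Vector:
    """L2-style kernel (hypothetical): a complete operation built from L1 primitives."""
    return [l1_dot(row, x) for row in a]


def l3_gemv(a: Matrix, x: Vector) -> Vector:
    """L3-style software API (hypothetical): checks inputs, then calls the kernel."""
    if not a or any(len(row) != len(x) for row in a):
        raise ValueError("each matrix row must match the vector length")
    return l2_gemv_kernel(a, x)


if __name__ == "__main__":
    # Matrix-vector product of [[1, 2], [3, 4]] with [1, 1].
    print(l3_gemv([[1.0, 2.0], [3.0, 4.0]], [1.0, 1.0]))  # prints [3.0, 7.0]
```

An application would normally call only the top layer, while a developer building a custom accelerator might reuse or modify the lower ones.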

Vitis Graph Library (New)

High-level software interfaces written in C/C++ for the ease of use without any additional hardware configurations.

Vitis Blockchain Solution

The first Vitis blockchain mining acceleration solution for power-efficient FPGA-based mining. Outperform the most efficient mining card with 2x the mining performance per watt of GPUs.

Vitis DSP Library

Accelerate DSP functions on Versal™ AI Engines, such as filters, FFT/iFFT, matrix multiply, widget API cast, widget real to complex, and DDS/Mixer.

Vitis Security Library

Achieve low-latency real-time performance for your security applications using AMD platforms.

Vitis AI Library

Accelerate AI inference using a set of C++ and Python APIs and pre-optimized deep learning models to achieve the highest inference performance for your applications.

Vitis Database Library

Accelerate data-intensive and compute-intensive algorithms in relational database management on AMD platforms.

Vitis Medical Imaging Library

Accelerate premium medical imaging on Versal™ devices with AI Engines, while delivering frame rates upwards of 1,000 FPS with low latency.

Vitis BLAS Library

Accelerate common linear algebra operations in your algorithms using performance-optimized BLAS routines.
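For readers less familiar with BLAS, the general matrix multiply (GEMM) routine computes `C = alpha * A * B + beta * C`. The following is a minimal plain-Python reference of that operation, useful for checking results on small inputs. It is not the Vitis BLAS API; the function name `gemm_reference` and its argument order are chosen here for illustration only.

```python
from typing import List

Matrix = List[List[float]]


def gemm_reference(alpha: float, a: Matrix, b: Matrix, beta: float, c: Matrix) -> Matrix:
    """Return alpha * (A x B) + beta * C for row-major nested lists."""
    m, k = len(a), len(b)
    n = len(b[0])
    if any(len(row) != k for row in a):
        raise ValueError("columns of A must equal rows of B")
    if any(len(row) != n for row in b):
        raise ValueError("B must be rectangular")
    if len(c) != m or any(len(row) != n for row in c):
        raise ValueError("C must have shape (rows of A, columns of B)")
    return [
        [
            alpha * sum(a[i][p] * b[p][j] for p in range(k)) + beta * c[i][j]
            for j in range(n)
        ]
        for i in range(m)
    ]


if __name__ == "__main__":
    a = [[1.0, 2.0], [3.0, 4.0]]
    b = [[5.0, 6.0], [7.0, 8.0]]
    c = [[1.0, 1.0], [1.0, 1.0]]
    print(gemm_reference(2.0, a, b, 3.0, c))  # prints [[41.0, 47.0], [89.0, 103.0]]
```

A reference like this runs in O(m·n·k) time on the CPU, which is the kind of dense, regular computation that an accelerated routine is meant to offload.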

Vitis Data Compression Library

Accelerate a broad range of data compression and decompression algorithms on AMD platforms.

Vitis Quantitative Finance Library

Accelerate a range of quantitative finance workloads, such as option pricing, modeling, trading, evaluation, and risk management.
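As a concrete instance of an option-pricing workload, the sketch below prices European options with the closed-form Black-Scholes formula in plain Python. Black-Scholes is chosen here only as a familiar example; the page does not say which models the library implements, and the function names and parameters below are illustrative, not the Vitis Quantitative Finance API.

```python
import math


def _norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def black_scholes(spot: float, strike: float, rate: float,
                  vol: float, maturity: float, is_call: bool = True) -> float:
    """Price a European option on a non-dividend-paying asset.

    spot, strike: current price and strike price
    rate: continuously compounded risk-free rate (per year)
    vol: annualized volatility
    maturity: time to expiry in years
    """
    if min(spot, strike, vol, maturity) <= 0.0:
        raise ValueError("spot, strike, vol, and maturity must be positive")
    sqrt_t = math.sqrt(maturity)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * maturity) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    discounted_strike = strike * math.exp(-rate * maturity)
    if is_call:
        return spot * _norm_cdf(d1) - discounted_strike * _norm_cdf(d2)
    return discounted_strike * _norm_cdf(-d2) - spot * _norm_cdf(-d1)


if __name__ == "__main__":
    # At-the-money call: spot 100, strike 100, 5% rate, 20% volatility, 1 year.
    print(round(black_scholes(100.0, 100.0, 0.05, 0.2, 1.0), 2))  # prints 10.45
```

Pricing a single option this way is cheap; the case for acceleration comes from repeating such calculations across large portfolios or from simulation-based models with no closed form.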